Remember the 90s, when No Fear stuff was cool, and when people still said "cool"?

Well, a new paper has brought No Fear back, by reporting on a woman who has no fear - due to brain damage. The article, The Human Amygdala and the Induction and Experience of Fear, is brought to you by a list of neuroscientists including big names such as Antonio Damasio (of Phineas Gage fame).


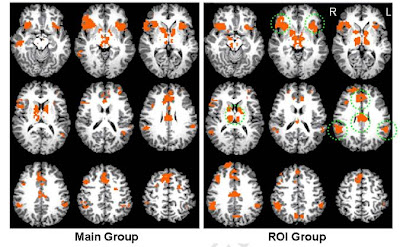

The basic story is nice and simple. There's a woman, SM, who lacks a part of the brain called the amygdala. They found that she can't feel fear. Therefore, it's reasonable to assume that the amygdala's required for fear. But there's a bit more to it than that...

The amygdala is a small nugget of the brain nestled in the medial temporal lobe. The name comes from the Greek for "almond" because apparently it looks like one, though I can't say I've noticed the resemblance myself.

What does it do? Good question. There are two main schools of thought. Some think that the amygdala is responsible for the emotion of fear, while others argue that its role is much broader and that it's responsible for measuring the "salience" or importance of stimuli, which covers fear but also much else.

That's where this new paper comes in, with the patient SM. She's not a new patient: she's been studied for years, and many papers have been published about her. I wonder if her initials don't stand for "Scientific Motherlode"?

She's one of the very few living cases of Urbach-Wiethe disease, an extremely rare genetic disorder which causes selective degeneration of the amygdala as well as other symptoms such as skin problems.

Previous studies on SM mostly focussed on specific aspects of her neurological function, e.g. memory, perception and so on. However, there have been a few studies of her "everyday" experiences and personality. Thus we learned that:

> Two experienced clinical psychologists conducted "blind" interviews of SM (the psychologists were not provided any background information)... Both reached the conclusion that SM expressed a normal range of affect and emotion... However, they both noted that SM was remarkably dispassionate when relating highly emotional and traumatic life experiences... To the psychologists, SM came across as a "survivor", as being "resilient" and even "heroic".

These observations were based on interviews under normal conditions; what would happen if you actually went out of your way to try and scare her? So they did.

First, they took her to an exotic pet store and got her to meet various snakes and spiders. She was perfectly happy picking up the various critters and had to be prevented from getting too closely acquainted with the more dangerous ones.

What's fascinating is that before she went to the store, she claimed to hate snakes and spiders! Why? Before she developed Urbach-Wiethe disease, she had a normal childhood up to about the age of 10. Presumably she used to be afraid of them, and just never updated this belief, a great example of how our own narratives about our feelings can clash with our real feelings.

They subsequently confirmed that SM was fearless by taking her to a "haunted asylum" (check it out, even the website is scary) and showing her various horror movie clips, as well as through interviews with her and her son. They also describe an incredible incident from several years ago: SM was walking home late at night when she saw

> A man, whom SM described as looking “drugged-out.” As she walked past the park, the man called out and motioned for her to come over. SM made her way to the park bench. As she got within arm’s reach of the man, he suddenly stood up, pulled her down to the bench by her shirt, stuck a knife to her throat, and exclaimed, “I’m going to cut you, bitch!”
>
> SM claims that she remained calm, did not panic, and did not feel afraid. In the distance she could hear the church choir singing. She looked at the man and confidently replied, “If you’re going to kill me, you’re gonna have to go through my God’s angels first.” The man suddenly let her go. SM reports “walking” back to her home. On the following day, she walked past the same park again. There were no signs of avoidance behavior and no feelings of fear.

All this suggests that the amygdala has a key role in the experience of fear, as opposed to other emotions: there is no evidence to suggest that SM lacks the ability to experience happiness or sadness in the same way.

So this is an interesting contribution to the debate on the role of the amygdala, although we really need someone to do equally detailed studies on other Urbach-Wiethe patients to make sure that it's not just that SM happens to be unusually brave for some other reason. What's doubly interesting, though, is that Ralph Adolphs, one of the authors, has previously argued against the view of the amygdala as a "fear center".

Links: I've previously written about the psychology of horror movies and I've reviewed quite a lot of them too.

Justin S. Feinstein, Ralph Adolphs, Antonio Damasio, & Daniel Tranel (2010). The Human Amygdala and the Induction and Experience of Fear. Current Biology.


