A group of Italian psychiatrists claim to explain How Neuroscience and Behavioral Genetics Improve Psychiatric Assessment: Report on a Violent Murder Case.

The paper presents the horrific case of a 24-year-old woman from Switzerland who smothered her newborn son to death immediately after giving birth in her boyfriend's apartment. After her arrest, she claimed to have no memory of the event. She had a history of abusing multiple drugs, including heroin, from the age of 13.

Forensic psychiatrists were asked to assess her case and try to answer the question of whether "there was substantial evidence that the defendant had an irresistible impulse to commit the crime." The paper doesn't discuss the outcome of the trial, but the authors say that in their opinion she exhibits a pattern of "pathologically impulsivity, antisocial tendencies, lack of planning...causally linked to the crime, thus providing the basis for an insanity defense."

But that's not all. In the paper, the authors bring neuroscience and genetics into the case in an attempt to provide

a more “objective description” of the defendant’s mental disease by providing evidence that the disease has “hard” biological bases. This is particularly important given that psychiatric symptoms may be easily faked as they are mostly based on the defendant’s verbal report.

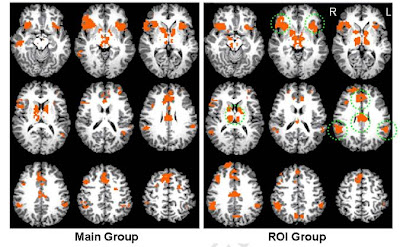

So they scanned her brain, and did DNA tests for 5 genes which have been previously linked to mental illness, impulsivity, or violent behaviour. What happened? Apparently her brain has "reduced gray matter volume in the left prefrontal cortex" - but that was compared to just 6 healthy control women. You really can't do this kind of analysis on a single subject, anyway.

As for her genes, well, she had genes. On the famous and much-debated 5HTTLPR polymorphism, for example, her genotype was long/short; while it's true that short is generally considered the "bad" genotype, something like 40% of white people, and an even higher proportion of East Asians, carry it. The situation was similar for the other four genes (STin2 (SLC6A4), rs4680 (COMT), MAOA-uVNTR, DRD4-2/11, for gene geeks).

I've previously posted about cases in which a well-defined disorder of the brain led to criminal behaviour. There was the man who became obsessed with child pornography following surgical removal of a tumour in his right temporal lobe. There are the people who show "sociopathic" behaviour following fronto-temporal degeneration.

However, this woman's brain was basically "normal", at least as far as a basic MRI scan could determine. All the pieces were there. Her genotypes were also normal, in that lots of normal people carry the same variants; it's not (as far as we know) that she has a rare genetic mutation like the one behind Brunner syndrome, in which an important gene is effectively knocked out. So I don't think neurobiology has much to add to this sad story.

*

We're willing to excuse perpetrators when there's a straightforward "biological cause" for their criminal behaviour: it's not their fault, they're ill. In all other cases, we assign blame: biology is a valid excuse, but nothing else is.

There seems to be a basic difference between the way in which we think about "biological" as opposed to "environmental" causes of behaviour. This is related, I think, to the Seductive Allure of Neuroscience Explanations and our fascination with brain scans that "prove that something is in the brain". But when you start to think about it, it becomes less and less clear that this distinction works.

A person's family, social and economic background is the strongest known predictor of criminality. Guys from stable, affluent families rarely mug people; some men from poor, single-parent backgrounds do. But muggers don't choose to be born into that life any more than the child-porn addict chose to have brain cancer.

Indeed, the mugger's situation is a more direct cause of his behaviour than a brain tumour. It's not hard to see how a mugger becomes, specifically, a mugger: because they've grown up with role-models who do that; because their friends do it or at least condone it; because it's the easiest way for them to make money.

But it's less obvious how brain damage by itself could cause someone to seek child porn. There's no child porn nucleus in the brain. Presumably, what it does is to remove the person's capacity for self-control, so they can't stop themselves from doing it.

This fits with the fact that people who show criminal behaviour after brain lesions often start to eat and have (non-criminal) sex uncontrollably as well. But that raises the question of why they want to do it in the first place. Were they, in some sense, a pedophile all along? If so, can we blame them for that?

Rigoni D, Pellegrini S, Mariotti V, Cozza A, Mechelli A, Ferrara SD, Pietrini P, & Sartori G (2010). How neuroscience and behavioral genetics improve psychiatric assessment: report on a violent murder case. Frontiers in Behavioral Neuroscience, 4. PMID: 21031162
