What's your anti-drug? Well, it might well be hemopressin. At least, that's probably your anti-marijuana.

Hemopressin is a small peptide (a short chain of amino acids) that was discovered in the brains of rodents in 2003. Its name comes from the fact that it's a breakdown product of hemoglobin and that it can lower blood pressure.

No-one seems to have looked yet to see whether hemopressin is found in humans, but it seems very likely: almost everything that's in your brain is in a mouse's brain, and vice versa.

Pharmacologically, hemopressin is literally an anti-marijuana molecule: it's an inverse agonist at CB1 receptors, which are the ones targeted by the psychoactive compounds in marijuana, and also by the neurotransmitters known as endocannabinoids. Cannabinoids turn CB1 receptors on; hemopressin turns them off.

Artificial CB1 blockers were developed as weight-loss drugs, and one of them, rimonabant, made it onto the market - but it was withdrawn after it turned out to cause depression and anxiety in many people.

So hemopressin is Nature's rimonabant, in which case it ought to do what rimonabant does: reduce appetite. And indeed a Journal of Neuroscience paper just out from Dodd et al shows that it does just that in rats and mice: injections of hemopressin reduced feeding.

Interestingly, this worked even when it was injected systemically, into the body rather than into the brain. Many proteins can't enter the brain when given this way, because they can't cross the blood-brain barrier, so they have to be injected directly into the brain, which makes researching them much harder. So hemopressin, with any luck, will be pretty easy to study. Any volunteers for the first human trial...?

Dodd, G., Mancini, G., Lutz, B., & Luckman, S. (2010). The peptide hemopressin acts through CB1 cannabinoid receptors to reduce food intake in rats and mice. Journal of Neuroscience, 30(21), 7369-7376. DOI: 10.1523/JNEUROSCI.5455-09.2010

This Is Your Brain's Anti-Drug

08.10

wsn

fMRI In 1000 Words

06.48

wsn

I thought I'd write a short and simple intro to how fMRI works. Most such explanations start with the physics of Magnetic Resonance Imaging and eventually explain how it lets you look at brain activity. I'm doing it the other way round, because I like brains more than physics.

So - everyone knows that fMRI is a way of measuring neural activation. But what does it mean for a neuron to be active? All neurons are "active" in a sense: they're alive, firing electrical action potentials, and releasing neurotransmitters onto other cells at synapses. If a cell becomes more activated, that means it's firing action potentials faster, or sending out more chemical signals. It's mostly synaptic activity that fMRI picks up.

How do you measure neural activation? You can do it directly by sticking in an electrode to measure action potentials, or by using a fine glass tube to sample neurotransmitter levels. You can also put electrodes on the scalp to pick up the electrical fields created by lots of neurons firing. But fMRI relies on an indirect approach: when a brain cell is firing hard, it uses more energy than when it's not.

Cells make energy from sugar and oxygen, and oxygen is transported in the blood. So when a given cell is working hard, it uses more oxygen, and the oxygen content of nearby blood falls. Synaptic activity, in particular, uses loads of oxygen. So you might expect highly active parts of the brain to have less oxygen. Counter-intuitively, they actually show an increase in blood oxygen, probably because blood flow to the area rises by more than the extra oxygen being used: a kind of "overcompensation" for the activity (there may be an "initial dip" in oxygen first, but it's very brief).

So blood oxygen is a proxy for activation. How do you measure it? Oxygen in blood binds to haemoglobin, a protein that contains iron (which is why blood is red, like rust, and tastes metallic, like iron). Conveniently, haemoglobin changes colour depending on whether it's carrying oxygen: oxygenated haemoglobin is bright red, while deoxygenated haemoglobin is a darker, purplish red. This is why you turn blue if you're suffocating, and it's part of why veins, which carry deoxygenated blood, look bluish through the skin.

You could measure neural activity by literally looking to see how red the brain is. This is actually possible, but obviously it's a bit impractical. Luckily, as well as changing colour, deoxygenated haemoglobin is paramagnetic: it is attracted by magnetic fields and distorts the field around it, whereas oxygenated haemoglobin barely does. So blood's magnetic properties depend on how oxygenated it is. That's really useful, but how do you measure those magnetic effects?

Using an extremely strong magnet - like the liquid-helium-cooled superconducting coil at the heart of most MRI scanners - you can make some of the protons in the body align in a special way. The protons in question are hydrogen nuclei; in the human body, most of them are found in water. If you then fire radio waves at these aligned protons, they can absorb them ("resonate"). As soon as you stop the radio waves, the protons release that energy back at you as a faint radio signal. Scanners record this signal as a series of "echoes", which is where the most common fMRI sequence, Echo-Planar Imaging (EPI), gets its name.

Each proton only responds to a specific frequency of radio waves, and that frequency is determined by the strength of the magnetic field the proton sits in: stronger fields, higher frequencies. Crucially, deoxygenated blood distorts the magnetic field around it, and so shifts the radio frequency at which nearby protons respond. Suppose a certain bit of the brain resonates at frequency X. If some deoxygenated blood appears nearby, it will stop the protons there from responding to that frequency, by making them respond to a slightly different one.

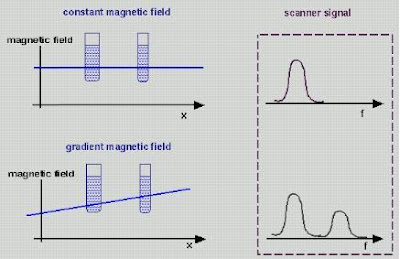

fMRI is essentially a way of measuring the degree to which protons in each part of the brain fail to respond at the "expected" resonant frequency X, due to interference from nearby deoxygenated haemoglobin. But how do you know what resonant frequency to expect? This is the clever bit: you set it yourself, by deliberately varying the magnetic field across different parts of the brain.

Say you make the magnetic field at the left side of the head slightly stronger than the one at the right: a magnetic gradient. The resonant frequency will then vary across the head: the further left, the higher the frequency. This is what the "gradient coils" in an MRI machine do.

Gradient coils therefore translate spatial location into magnetic field strength, and as we've seen, magnetic field strength determines resonant frequency. So spatial location determines field strength, which determines resonant frequency. All you then need to do is hit the brain with a burst of radio waves spanning a whole range of frequencies, called the "RF pulse", and record the waves you get back.
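The chain "location → field strength → frequency" can be made concrete with the Larmor relation, f = γ̄·B, where γ̄ is about 42.58 MHz per tesla for hydrogen. Below is a minimal sketch, assuming a 3 T main field and a single linear gradient of 10 mT/m along the left-right axis (positive x = left), and ignoring everything else (chemical shift, BOLD effects, noise). The function names and the field and gradient values are illustrative choices, not taken from any scanner's software.

```python
# Gyromagnetic ratio of hydrogen divided by 2*pi, in hertz per tesla
# (about 42.58 MHz/T).
GAMMA_BAR_H = 42.577e6


def larmor_frequency(field_tesla: float) -> float:
    """Resonant frequency (Hz) of hydrogen protons sitting in a given field."""
    return GAMMA_BAR_H * field_tesla


def frequency_at(x_m: float, b0_tesla: float, gradient_t_per_m: float) -> float:
    """Hypothetical helper: frequency at position x (metres) when the field is B0 + G * x."""
    return larmor_frequency(b0_tesla + gradient_t_per_m * x_m)


def position_from_frequency(f_hz: float, b0_tesla: float, gradient_t_per_m: float) -> float:
    """Hypothetical helper: invert the mapping to find which position resonates at f."""
    return (f_hz / GAMMA_BAR_H - b0_tesla) / gradient_t_per_m


if __name__ == "__main__":
    B0, G = 3.0, 0.010  # assumed: 3 T scanner, 10 mT/m gradient
    print(f"centre: {larmor_frequency(B0) / 1e6:.2f} MHz")
    for x in (-0.05, 0.0, 0.05):  # 5 cm right, centre, 5 cm left
        offset = frequency_at(x, B0, G) - larmor_frequency(B0)
        print(f"x = {x:+.2f} m -> offset {offset:+.0f} Hz")
```

With these assumed numbers, moving 5 cm off-centre shifts the frequency by roughly 21 kHz on top of a centre frequency of about 128 MHz, so a small gradient is enough to give every position its own frequency.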

The strength of the returning radio waves at a given frequency therefore corresponds to the number of protons in the matching place, so you can work out the density of hydrogen (mostly water) in each part of the brain from the frequencies you get back. Also, different kinds of tissue respond differently to excitation; bone responds differently from brain grey matter, for example. So by using magnetic gradients, you can build up an image of brain structure.
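The idea that the signal strength at each frequency gives the amount of tissue at the matching position is, at heart, a Fourier transform. Here is a deliberately stripped-down 1D sketch, assuming an ideal linear gradient in which spatial bin k resonates exactly k cycles per readout window above the reference frequency, with no relaxation, noise, or BOLD effects. The density profile is made up for illustration, and real scanners encode two or three dimensions with far more elaborate reconstruction.

```python
import numpy as np


def simulate_readout(density: np.ndarray) -> np.ndarray:
    """Toy signal from a 1D object under an ideal linear gradient.

    Assumption: bin k resonates k cycles per readout window above the
    reference frequency, so each time sample m is a sum of complex
    sinusoids weighted by the number of protons in each bin.
    """
    n = density.size
    m = np.arange(n)[:, None]  # time-sample index (rows)
    k = np.arange(n)[None, :]  # spatial bin index, which is also the frequency (columns)
    return (density[None, :] * np.exp(2j * np.pi * k * m / n)).sum(axis=1)


def reconstruct_profile(signal: np.ndarray) -> np.ndarray:
    """Recover per-bin proton density from the signal's frequency content."""
    # numpy's forward FFT undoes the positive-exponent sum above, up to a factor n.
    return (np.fft.fft(signal) / signal.size).real


if __name__ == "__main__":
    # Hypothetical 1D "head": empty space, tissue, a denser region, tissue, empty space.
    density = np.array([0, 0, 1, 3, 3, 1, 0, 0], dtype=float)
    recovered = reconstruct_profile(simulate_readout(density))
    print(np.round(recovered, 6))
```

The recovered profile matches the input density up to floating-point rounding, which is the sense in which "frequency = position" lets you turn recorded radio waves into an image.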

Of course you can't scan the whole brain at once: you scan it in slices, divided up into roughly cubic units called voxels. Typically in fMRI these are 3x3x3 mm or so, but they can be much smaller for specialized applications. The smaller the voxels, the longer the scan takes because it requires more gradient shifting. The loud noises that occur during MRI scans are caused by the gradient coils changing the gradients extremely quickly in order to scan the whole brain. Modern scanners typically image the whole brain once every 3 seconds, but you can go even faster.
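To see why smaller voxels are costly, it helps to count them. The sketch below assumes a hypothetical box-shaped field of view of 192 x 192 x 144 mm (an illustrative figure, not a standard) and cubic voxels. Halving the voxel edge multiplies the number of voxels by eight, and each of them has to be spatially encoded somehow.

```python
import math


def voxel_grid(fov_mm, voxel_mm):
    """Hypothetical helper: voxels per axis, and in total, for a box of cubic voxels."""
    dims = tuple(math.ceil(extent / voxel_mm) for extent in fov_mm)
    return dims, math.prod(dims)


if __name__ == "__main__":
    fov = (192.0, 192.0, 144.0)  # assumed field of view in mm
    for size in (3.0, 1.5):
        dims, total = voxel_grid(fov, size)
        print(f"{size} mm voxels: {dims} -> {total:,} voxels")
```

With these assumed numbers that's 196,608 voxels at 3 mm versus 1,572,864 at 1.5 mm. Scan time doesn't scale exactly with voxel count (it depends on how many encoding steps each slice needs and how many slices there are), but this cubic growth is the basic reason finer resolution costs time.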

As we've seen, deoxygenated blood degrades the image nearby; this is the basis of what's called the Blood Oxygenation Level Dependent (BOLD) signal. Because neural activation increases blood oxygen, it reduces that degradation, so active areas literally get brighter in the image. In principle, then, you could see the changes with the naked eye. In practice they're tiny, and there's always a lot of background noise as well, so you need statistical analysis to determine which parts light up, and then map this onto the brain as colored blobs. But that's another story...

Do It Like You Dopamine It

14.40

wsn

Neuroskeptic readers will know that I'm a big fan of theories. Rather than just poking around (or scanning) the brain under different conditions and seeing what happens, it's always better to have a testable hypothesis.

I just found a 2007 paper by computational neuroscientists Niv et al that puts forward a very interesting theory about dopamine. Dopamine is a neurotransmitter, and dopamine cells are known to fire in phasic bursts - short volleys of spikes lasting a fraction of a second - in response to something which is either pleasurable in itself, or something that you've learned is associated with pleasure. Dopamine is therefore thought to be involved in learning what to do in order to get pleasurable rewards.

But baseline, tonic dopamine levels vary over longer periods as well. The function of this tonic dopamine firing, and its relationship, if any, to phasic dopamine signalling, is less clear. Niv et al's idea is that the tonic dopamine level represents the brain's estimate of the average availability of rewards in the environment, and that it therefore controls how "vigorously" we should do stuff.

A high reward availability means that, in general, there's lots of stuff going on and lots of potential gains to be made, so if you're not out there getting some reward, you're missing out. In economic terms, the opportunity cost of not acting, or of acting slowly, is high, so you need to hurry up. On the other hand, if only minor rewards are available, you might as well take things nice and slow, to conserve your energy. Niv et al present a simple mathematical model in which a hypothetical rat must decide how often to press a lever in order to get food, and show that it accounts for data from animal learning experiments.
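The trade-off can be illustrated with a stripped-down version of this kind of cost. As a simplifying assumption, suppose that responding with latency t costs C / t in effort (acting fast is tiring), plus R × t in opportunity cost (time spent on one press is time not spent collecting rewards at the average rate R). Minimising C / t + R × t gives t* = sqrt(C / R), so a higher average reward rate, which Niv et al link to tonic dopamine, means faster responding. This is a sketch of the idea, not a reproduction of their full model; the function names and numbers are hypothetical.

```python
import math


def press_cost(latency_s: float, effort_cost: float, avg_reward_rate: float) -> float:
    """Hypothetical cost of one lever press: effort for acting fast plus reward forgone while waiting."""
    return effort_cost / latency_s + avg_reward_rate * latency_s


def optimal_latency(effort_cost: float, avg_reward_rate: float) -> float:
    """Latency minimising press_cost: setting -C/t**2 + R = 0 gives t = sqrt(C / R)."""
    return math.sqrt(effort_cost / avg_reward_rate)


if __name__ == "__main__":
    effort = 1.0  # assumed effort constant
    for rate in (0.25, 1.0, 4.0):  # assumed average reward rates (rewards per second)
        t = optimal_latency(effort, rate)
        print(f"avg reward rate {rate}: best latency {t:.2f} s")
```

This prints best latencies of 2.00 s, 1.00 s and 0.50 s: quadrupling the average reward rate halves the best latency. The richer the environment, the more it costs to dawdle.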

The distinction between phasic dopamine (a specific reward) vs. tonic dopamine (overall reward availability) is a bit like the distinction between fear vs. anxiety. Fear is what you feel when something scary, i.e. harmful, is right there in front of you. Anxiety is the sense that something harmful could be round the next corner.

This theory accounts for the fact that if you give someone a drug that increases dopamine levels, such as amphetamine, they become hyperactive: they do more stuff, faster, or at least try to. That's why they call it speed. This happens to animals too. Crucially, this hyperactivity starts almost immediately, which means that it can't be a product of learning; it fits much better with tonic dopamine directly setting how vigorously we act.

It also rings true in human terms. The feeling that everything's incredibly important, and that everyday tasks are really exciting, is one of the main effects of amphetamine. Plenty of speed users have a story about the time they stayed up all night cleaning every inch of their house or organizing their wardrobe. This can easily develop into the compulsive, pointless repetition of the same task over and over. People with bipolar disorder often report the same kind of thing during (hypo)mania.

What controls tonic dopamine levels? A brilliantly elegant answer would be: phasic dopamine. Maybe every time phasic dopamine levels spike in response to a reward (or something you've learned to associate with a reward), some of the dopamine gets left over. If there's lots of phasic dopamine firing, which suggests that the availability of rewards is high, tonic dopamine levels would rise.
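The "leftover dopamine" idea amounts to a leaky integrator: the tonic level decays a little at each time step and gains a fraction of every phasic burst. This is only a toy version of the speculation above, not a model from Niv et al, and the leftover fraction and decay rate are arbitrary assumptions.

```python
def tonic_trace(bursts, leftover=0.2, decay=0.9):
    """Toy 'leftover dopamine' model with hypothetical parameters.

    At each step the tonic level decays by a factor `decay` and gains
    `leftover` times that step's phasic burst size.
    """
    level = 0.0
    trace = []
    for burst in bursts:
        level = decay * level + leftover * burst
        trace.append(level)
    return trace


if __name__ == "__main__":
    # Ten rewarded steps followed by ten unrewarded ones.
    trace = tonic_trace([1.0] * 10 + [0.0] * 10)
    print(" ".join(f"{x:.2f}" for x in trace))
```

In this toy version the tonic level only climbs gradually over the rewarded steps and drifts back down afterwards: it lags behind the rewards rather than anticipating them.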

Unfortunately, it's probably not that simple. Signals from different parts of the brain seem to alter tonic and phasic dopamine firing largely independently, and this scheme would mean that tonic dopamine only increased after a good few rewards, not pre-emptively, which seems unlikely. The truth is, we don't know what sets the dopamine tone, and we don't really know what it does; but Niv et al's account is the most convincing I've come across...

Niv, Y., Daw, N. D., Joel, D., & Dayan, P. (2007). Tonic dopamine: opportunity costs and the control of response vigor. Psychopharmacology, 191(3), 507-520. PMID: 17031711

Posted in

animals,

drugs,

mental health,

papers

Commercialization vs. Medicalization

09.00

wsn

Suppose there's someone who's perfectly healthy, just stressed, or worried, or unhappy.

And suppose that, for whatever reason, they go to see their doctor about their problems, and come away with a diagnosis of depression, or social anxiety disorder, or something similar, and a prescription for Prozac.

What's wrong with this picture? Well, it's a clear case of medicalization: because I made it up to be a good example of medicalization. But what's wrong with medicalization? The medicines themselves? Many people think so, but if you ask me, they're the least troublesome part of the process.

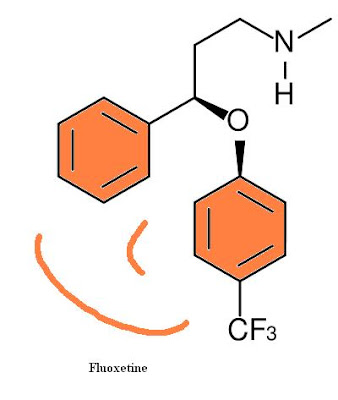

Drugs cost money, but not much: generic fluoxetine, i.e. non-brand-name Prozac, currently costs less than 10 cents per day. Drugs have side effects, but if our hypothetical person doesn't like the Prozac they've been prescribed, there's nothing stopping them from chucking it in the bin.

A diagnosis, on the other hand, is a lot harder to shake. In theory, one could get a second opinion from a different doctor and be declared perfectly healthy, but in all my conversations with psychiatrists and patients, I've never known someone with a mental health diagnosis to get "undiagnosed" completely.

What's harmful about a mental health diagnosis? It changes the way you think about yourself, in many complicated ways. Just for one thing, it's likely to make you reconsider your past actions and ask if they were "really you", or whether they were caused by your illness.

Now, if you really are mentally ill, that is, if the diagnosis is accurate, this change will probably be a good thing; it might help you realize that with help, you can change, and avoid making the same mistakes you blame yourself for, for example. But if you're not ill, the same changes might be harmful.

A diagnosis invites you to think about problems through the lens of objective, impersonal analysis and treatment, what we might call the "clinical approach". The clinical approach is obviously the best one for most physical diseases. If you have cholera, you are ill, and you need to be diagnosed, and treated appropriately. Most people would agree that the clinical approach is also useful, albeit more problematic, in clear cut mental illnesses like schizophrenia, bipolar disorder, and (some cases of) depression.

But if your problem, or the root of your problems, is not that you're ill but that you're poor, or a victim of discrimination, or in the wrong job, or the wrong relationship, or you don't have either, etc., then a diagnosis is both futile and, quite possibly, actively harmful.

Futile because there's no disease to treat, and harmful because, by locating the origins of your problems inside yourself (your neurochemistry, a "chemical imbalance"), it diverts attention from the real issues and the real solutions. Maybe you just need to change your situation, take a decision, get a new perspective, or stop doing something.

Is there an answer? Many people want us to stop taking so many antidepressants: reverse the trend of medicalization, by reducing the number of pills we take. But there may be another way: commercialization.

Suppose that you didn't need a prescription to get Prozac: you just bought SSRIs over the counter, like aspirin, whenever you felt like it. What would this mean? It might mean more people taking Prozac, although I'm not sure it would. But it would almost certainly change the way people think about antidepressants.

Commercializing SSRIs would, I think, mean that many SSRI users stopped seeing themselves as "psychiatric patients", or the pills as cures for their "illnesses". Instead they'd see them more like aspirin, or coffee, or beer: something to help you "feel better", a nice thing to have in some circumstances, but not something that's going to solve all your problems. It would, in other words, prevent mentally healthy people from thinking of themselves as "mentally ill". With any luck, our hypothetical friend from the first paragraph would be one of them.

Of course, this would be no benefit if you think that the whole problem with Prozac is the actual drug, fluoxetine hydrochloride. But if, like me, you think fluoxetine hydrochloride is pretty benign compared to the idea of Prozac, it would be a good move. The good thing about commercialization is that it makes it easy to buy things without having to think about them.

You can easily take this argument too far, and if you do, you'll eventually arrive approximately here. Don't. Serious clinical depression and anxiety disorders are real. People who suffer from them often need "prescription-strength" drugs and, more importantly, professional help rather than being left to self-treat, because the ability to take care of yourself is, almost by definition, impaired in mental illness.

But these people might benefit from the commercialization of mood as well. They'd no longer be seen as qualitatively different from everyone else, weird and unusual. It's like pain: if someone's in severe pain and needs prescription-strength painkillers, that's no big deal, because hey, we've all taken aspirin for headaches.

Commercialization would be better than medicalization for other drugs too. Take flibanserin, the new drug for "Hypoactive Sexual Desire Disorder", a condition which, according to the drug company that makes flibanserin, affects maybe 20% of women.

Whether flibanserin really boosts libido to any significant extent is unclear, but let's assume it does. Why not sell it over-the-counter? Give it a raunchy name, put it in a colorful box, and sell it in pharmacies next to the condoms. I can picture it now...

Now that would be pretty ridiculous. It would be a crass example of the commercialization of sexuality. But flibanserin already is - or at least, saying 20% of women ought to be taking it is. By selling it as a lifestyle product, instead of a medical treatment, its crassness would be obvious, and we'd just have lots of people taking flibanserin, instead of lots of people taking flibanserin and thinking of themselves as suffering from "Hypoactive Sexual Desire Disorder" i.e. a mental illness.

Unfortunately, I rather doubt that this is going to happen any time soon. But in many "Third World" countries, you'll find antidepressants, and indeed most other drugs, on pharmacy shelves for anyone to buy without a prescription. To Westerners, this might seem primitive. I'm not so sure.

Posted in

1in4,

antidepressants,

flibanserin,

mental health,

politics

Happiness Is Not A Fish You Can Eat

08.50

wsn

Wouldn't it be nice if you could improve your mental health just by eating more fish?

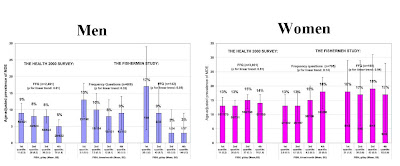

The authors looked at a large sample (total n=6,500) of Finnish people from the general population, and asked them questions about their diet and their mood. They found that the more fish a person reported eating, the less likely they were to self-report depressive symptoms. However, this should be taken with a pinch of salt, a slice of lemon and a light cheese sauc...sorry. This should be taken with a pinch of salt, because it was only true in men, and it was only statistically significant using some measures of fish eating, not others.

To be fair, it's completely plausible that eating lots of omega-3 fatty acids, the fats found in oily fish, is good for you, because they're known to be involved in nerve cell function. And there are many papers finding them to be a good thing. But this study is not one of them. (The same authors also have another paper out finding no correlation between fish eating or omega-3 and "psychological distress", but it's largely overlapping data.)

The authors conclude that fish may be beneficial to mental health in men, albeit not through omega-3 fatty acids. They suggest that fish may instead provide some kind of high-quality protein or minerals. However, another explanation, which the authors also raise, is that fish eating is only correlated with lower depression because it's a marker for other lifestyle factors:

The observed association between high fish consumption and reduced risk for depressive episodes in the men may indicate complex associations between depression and lifestyle which we were not able to take into account. Diet and fish consumption may be a proxy for factors that have effect on mental well-being particularly in men. A plausible explanation is that fish consumption in men is a surrogate marker for some underlying but yet unidentified lifestyle factors that protect against depression.

I think it's fair to say that the jury is still out on the benefits of omega-3s. As a vegetarian I don't eat fish, and I have a history of depression and take not one, but two, antidepressants, so maybe I'm living proof that a lack of fish is a bad thing. I don't think so, though: I took omega-3 supplements for a few months and felt no different. I gave up because they were costing me £30 a box.

Posted in

mental health,

papers,

woo

Mice That Fight for Their Rights

15.10

wsn

Israeli biologists Feder et al report on Selective breeding for dominant and submissive behavior in Sabra mice.

Mice are social animals and like many species, they show dominance hierarchies. When they first meet, they'll often fight each other. The winner gets to be Mr (or Mrs) Big, and they enjoy first pick of the food, mating opportunities, etc - for as long as they can remain dominant.

But what determines which mice become top dog? Feder et al show that it's partially under genetic control. They took a normal population of laboratory mice, paired them up, and made them battle for supremacy in a simple set-up in which only one mouse can get access to a central food supply.

At first, only about 30% of pairs developed clear dominance/submission relationships, but the ones that did were selectively bred: dominant males mated with dominant females, and submissive males with submissive females. The offspring were put through the same process, and it was repeated.

The results were dramatic: after 4 generations of successive selection, 80% of the pairs showed clear dominance and submission behaviour. And with each generation of breeding, the dominance relationships appeared faster, and stronger: at first the winners only got slightly more access to the food, but by the 4th generation, they almost completely monopolized it. As expected, the mice bred to be dominant were overwhelmingly more likely to end up on top. The differences were not due to general differences in activity levels or anxiety.

But the naturally timid mice could be made to fight for their rights by treating them with antidepressants - after a month of imipramine, they were taking crap from no-one.

Feder et al say that previous studies have also shown anti-submissive effects of antidepressants, while drugs used to treat mania reduce dominance. Anyone who's experienced a mood disorder will probably be able to relate to this: depressed people tend to feel like they belong at the bottom of the pecking order of life, while mania is classically associated with believing you're the greatest person in history.

So dominance and submission could provide a new way of testing the effects of drugs on mood. That would be welcome, because current animal models of depression and antidepressants mostly rely on putting animals in a glass of water and seeing how long they take to stop struggling...

Feder, Y., Nesher, E., Ogran, A., Kreinin, A., Malatynska, E., Yadid, G., & Pinhasov, A. (2010). Selective breeding for dominant and submissive behavior in Sabra mice. Journal of Affective Disorders. DOI: 10.1016/j.jad.2010.03.018

Posted in

animals,

antidepressants,

drugs,

funny,

mental health,

papers

Do Cats Hallucinate?

12.40

wsn

I have two cats. One is about four, and he is a psychopath. The other is sixteen - elderly, in cat terms - and I've recently noticed some changes in her behaviour.

But on top of that, she's started pausing in the middle of whatever she's doing and staring at empty corners, or walls. All cats sit down and gaze into space a lot of the time, but this is different - it happens in the middle of normal actions, like eating or walking around. What does this mean?

Could she be hallucinating? Hallucinations are unfortunately not uncommon in elderly people: seeing and hearing things that aren't there can be a symptom of Alzheimer's and other forms of dementia. Do cats get Alzheimer's? The internet says: yes. There doesn't seem to have been much scientific research on the question, but a few studies have found Alzheimer's-like changes (amyloid-beta protein accumulation) in the brains of old cats. Whether these cause the same symptoms as they do in people is unclear, but why not?

How would you know if an animal was hallucinating? They can't talk about it, and unlike, say, hunger or pain, they don't have specific ways of communicating it through body language or cries. A hallucinating animal would, presumably, react fairly normally to whatever it thought it saw or heard, so hallucinations would show up as normal behaviours in inappropriate situations. Whether this is what's happening to my cat, I'm not sure, but again, it's possible.

A more philosophical issue is whether we can conclude that this kind of out-of-context behaviour means the animal is experiencing a hallucination. But this is really just the age-old question of whether animals are conscious at all. If they are, then they can presumably hallucinate: if you can be conscious of sensations, you can be conscious of false sensations.

For what it's worth, my view is that animals, or at any rate mammals, are conscious. Humans are (although technically we only know for sure that we personally are, and have to assume the same is true of others). Mammalian brains are structured in a similar way to our own: they're made of the same cells, they use the same neurotransmitters, the same drugs interfere with them in the same ways, and pretty much all of the brain regions are there, although the sizes differ.

There's of course a big difference between us and other mammals: we have language, conceptual thinking, and so forth. But does consciousness depend on that? It seems unlikely, just because most of what we're conscious of at any one time has nothing to do with those specifically human abilities.

Right now, I'm conscious of what I can see, what I can hear, what I can feel with my fingertips, and the thoughts I'm writing down. Only a quarter of that (to put it crudely) is unique to humans. And I'm not always aware of thoughts or words; there are plenty of times when I'm only aware of sensations and perceptions.

Probably the closest we get to animal consciousness is in strong, primitive experiences like pain, panic and anger, in which we "take leave of our senses" - not meaning that we become unconscious, but that we temporarily stop being able to "think straight", i.e. like a human. That doesn't mean that animals spend all their time in some extreme emotional state, but it's harder for us to know what it's like to be a relaxed cat, because generally when we're relaxed, we're thinking (or daydreaming, etc. Although who's to say cats don't? They dream, after all...)

Posted in

animals,

drugs,

philosophy

Prozac and the Killer

05.55

wsn

Uh-oh, here's a troubling paper: Effects of selective serotonin reuptake inhibitors on motor neuron survival. According to Anderson et al, standard doses of fluoxetine (Prozac) and paroxetine (Paxil), two of the biggest-selling SSRI antidepressants, both had a devastating effect on the survival of mammalian nerve cells growing in a dish. Within just 24 hours, the antidepressants had killed over half the cells, and 86% of them in the case of Paxil. Take a look:

Motor neurons were challenged with fluoxetine and paroxetine at clinically relevant doses ... In fluoxetine-treated motor neurons there was ~52% cell death while in paroxetine-treated cells there was 14% cell survival.... Both SSRIs decreased cell survival in a dose-dependent manner. This study is provocative enough to call for further in vivo studies.

Oh dear. Should we be worried for the safety of the nerves of everyone taking SSRIs? I'm not, for two reasons.

Reason #1 is that one of the authors on this paper was Dr Amy Bishop, aka the University of Alabama shooter. Three months ago, Bishop shot dead 3 colleagues and injured 3 others during a departmental meeting at the University of Alabama in Huntsville.

A blog called Shepherd and Black Sheep did some nice investigating into Bishop's work following the shootings. They pointed out that of the five authors on the SSRI paper, one was Amy Bishop, and the other four were her husband and three children. They're all listed as working for a "Cherokee Lab Systems", but

The website for Cherokee Labsystems -- www.cherokeelabsystems.com -- has a notice "Please stand by. We are currently updating our site and will be on-line shortly" and also shows the web address defaults to http://cherokeelabs.com/

According to the Wayback Machine this web address -- http://cherokeelabs.com/ -- was only active October 16, 2003 through January 30, 2005, but all the archived pages for that period show a website that relates to Cherokee Labrador dogs, not a genetic research laboratory.

Moreover, Googling with street view the claimed address for Cherokee Labsystems - 2103 McDowling Dr. SE, Huntsville, AL - shows a residential home...

Fortunately there's a second reason to doubt that SSRIs cause this kind of motor neuron toxicity in people: SSRIs don't cause this kind of motor neuron toxicity in people. If they did, everyone would collapse and/or die after popping a single Prozac.

Millions of people have been taking SSRIs for years and, whatever else may have happened to them, they are still standing, which is proof that they don't. The fact that a low dose of alcohol (0.08%, i.e. just "legally drunk") also killed a large proportion of nerve cells in this study is more evidence that these results can't be extrapolated to humans. Antidepressants are in fact commonly used to help treat the pain resulting from neuropathic nerve damage.

It's always possible to explain away such differences. Maybe SSRIs can cause motor neurotoxicity in humans, but only at higher doses, or only after very long periods, or only in some people. Anything's possible. But given that the effects actually seen in this study - dramatic, rapid, and present at normal doses - never happen in humans, such possibilities are no more likely, in the light of these results, than they were before.

This is the serious lesson of this paper: if you've got data showing that something causes some effect in animal models or cell cultures, but it doesn't do that in real life, the simplest explanation is that there's something wrong with your model, or your data. Unfortunately, Anderson et al is by no means the only example of putting the experimental cart before the clinical horse...

Posted in

antidepressants,

bad neuroscience,

drugs,

papers
